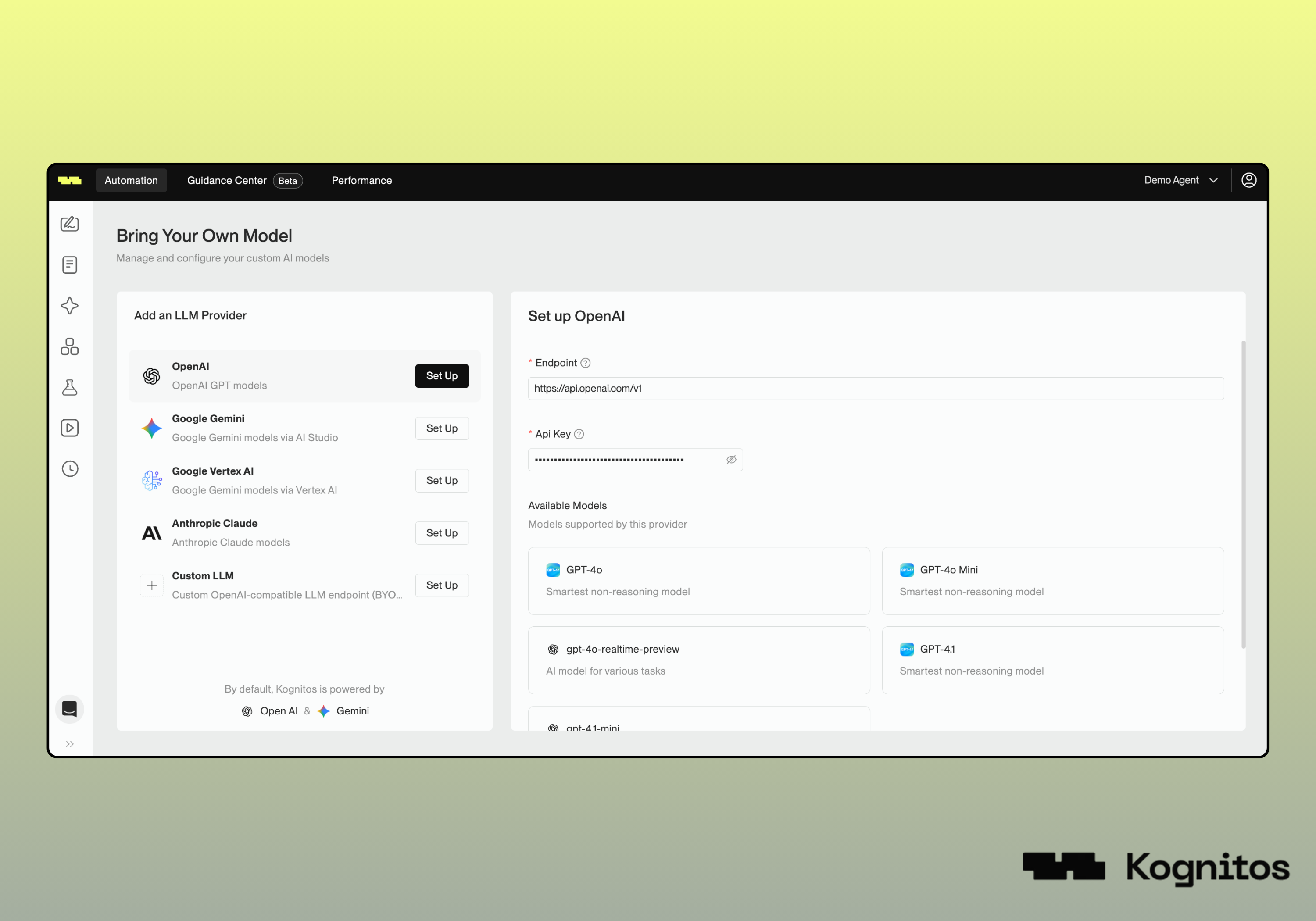

| Provider | Supported Models |

|---|---|

| OpenAI | GPT-4o, GPT-4o Mini, gpt-4o-realtime-preview, gpt-4.1-mini |

| Google Gemini | Gemini 2.5 Pro, Gemini 2.0 Flash, gemini-2.5-flash, gemini-2.5-flash-lite |

| Google Vertex AI | Gemini 2.5 Pro, Gemini 2.0 Flash, gemini-2.5-flash, gemini-2.5-flash-lite |

| Anthropic Claude | claude-opus-4, claude-sonnet-4.5, claude-haiku-4.5 |

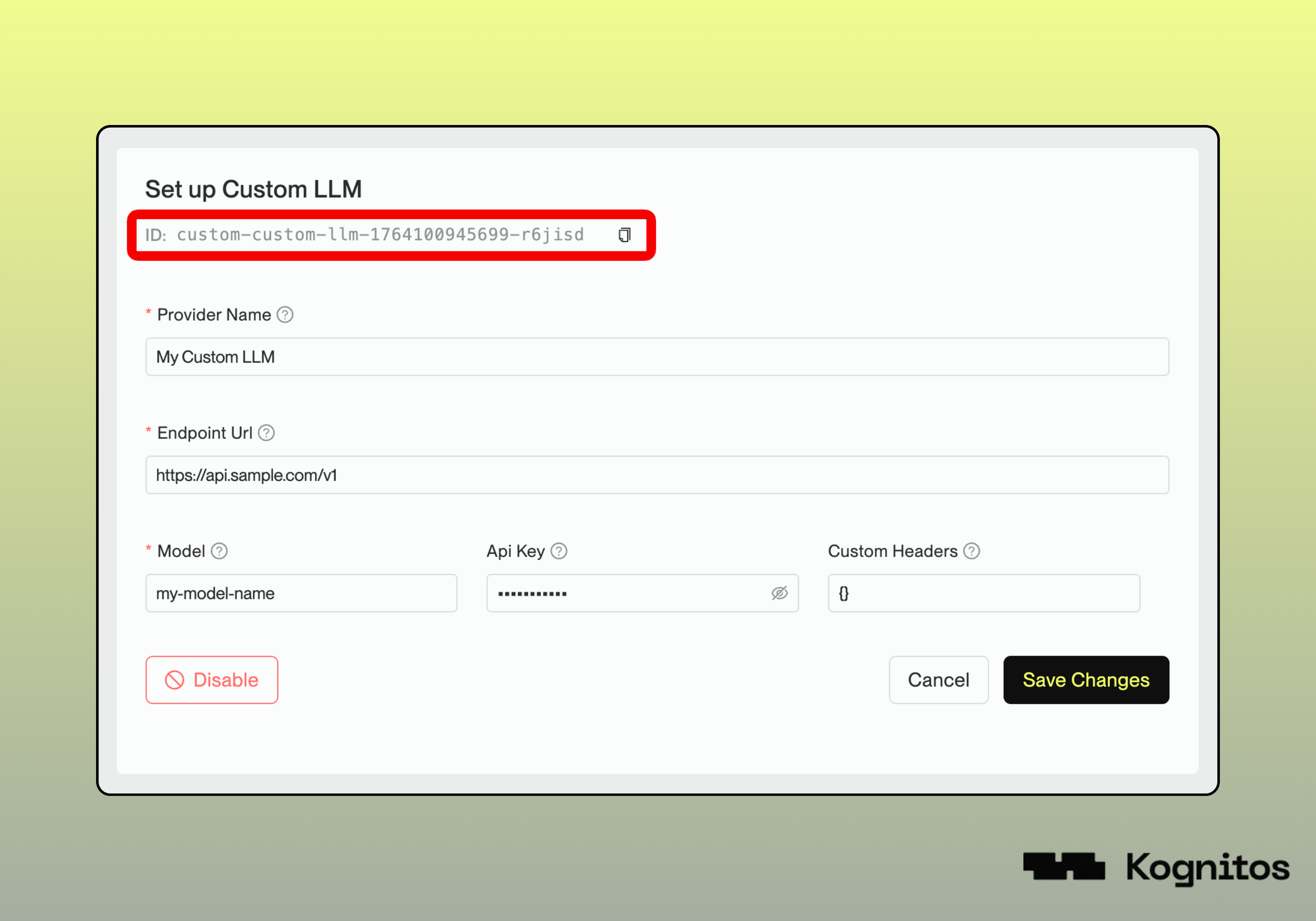

| Custom | Any custom LLM model not listed above |